Magazine: Features

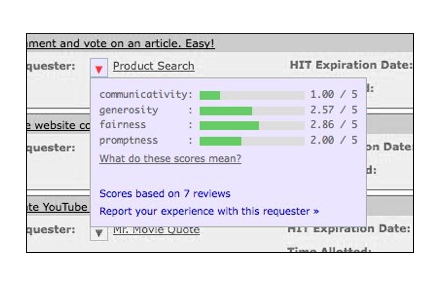

Ethics and tactics of professional crowdwork

Paid crowd workers are not just an API call, but all too often they are treated like one.

Full text is also available in the ACM Digital Library.